They copied several thousand documents and published the data on the dark web.

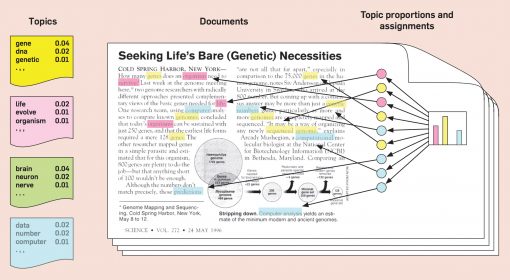

While numerically, the topic where user has a higher-probability (from model.Hackers recently attacked an international company.The probabilities do not add up to 1.0, why?.The two probabilities add up to 1.0, and the topic where user has a higher-probability (from model.show_topics()) has also the higher probability assigned.īut for get_term_topics, there are questions: # get_term_topics: įor get_document_topics, the output makes sense. Print "get_term_topics: ", model.get_term_topics("user", minimum_probability=0.000001) Print "get_document_topics", model.get_document_topics(bow) # get_document_topics for a document with a single token 'user' Model = (corpus=corpus, id2word=dictionary, num_topics=2, # build the corpus, dict and train the modelĬorpus = The ldamodel in gensim has the two methods: get_document_topics and get_term_topics.ĭespite their use in this gensim tutorial notebook, I do not fully understand how to interpret the output of get_term_topics and created the self-contained code below to show what I mean: from gensim import corpora, models get_document_topics: returns the topic distribution for the given document.get_term_topics: returns the most relevant topics to the given word.Get_document_topics(bow, minimum_probability=None, minimum_phi_value=None, per_word_topics=False)Īs for the use of each function, as stated by the documentation: Here is the signature of the functions: get_term_topics(word_id, minimum_probability=None)
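To make the difference between the two outputs concrete, here is a small sketch in plain Python. It does not call gensim; the vocabulary and all the numbers are made up for illustration, and it assumes that get_term_topics reports, for each topic, roughly how much weight that topic gives the word. Under that assumption, the values for one word come from different per-topic distributions, so there is no reason for them to sum to 1.0, whereas a document's topic distribution does.

```python
# Hypothetical numbers for a 2-topic model over a 4-word vocabulary.
vocab = ["user", "system", "graph", "trees"]

# Topic-word weights: each ROW (one topic) is a distribution over words.
topic_word = [
    [0.40, 0.30, 0.20, 0.10],  # topic 0
    [0.05, 0.15, 0.40, 0.40],  # topic 1
]

# Document-topic weights for one document: a distribution over topics.
doc_topics = [0.75, 0.25]

word_id = vocab.index("user")

# Column for "user": one weight per topic, each taken from a different row.
term_topics = [(topic_id, row[word_id]) for topic_id, row in enumerate(topic_word)]

row_sums = [sum(row) for row in topic_word]
column_sum = sum(weight for _, weight in term_topics)

print("per-topic row sums:", row_sums)
print("document-topic sum:", sum(doc_topics))
print("weights for 'user':", term_topics, "sum:", column_sum)
```

The per-topic rows and the document-topic list each sum to 1.0, but the column for "user" does not. The ordering is still informative: the topic that gives "user" more weight comes out higher.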

I was working on LDA topic modeling and came across this post. I created two topics, let's say topic1 and topic2.

To answer your first question, the probabilities do add up to 1.0 for a document, and that is what get_document_topics does. The documentation clearly states that it returns the topic distribution for the given document bow, as a list of (topic_id, topic_probability) 2-tuples.

Further, I tried get_term_topics for the keyword "ierr":

    t = lda.get_term_topics("ierr", minimum_probability=0.000001)

and the result is [...] which is nothing but the word's contribution to determining each topic, which makes sense.

So, you can label the document based on the topic distribution you get from get_document_topics, and you can determine the importance of the word based on the contribution given by get_term_topics.

Be careful: both functions have an optional minimum_probability argument. If you don't set this option, the probabilities won't sum to 1, because low-probability entries will be discarded.
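The effect of the threshold can be sketched without gensim. The helper filter_topics below is hypothetical and only mimics the filtering described above; it is not gensim's implementation, and the distribution and the 0.01 cutoff are illustrative values.

```python
def filter_topics(distribution, minimum_probability):
    """Keep only the (topic_id, probability) pairs at or above the threshold."""
    return [(t, p) for t, p in distribution if p >= minimum_probability]


# A made-up topic distribution for one document; it sums to 1.0.
full = [(0, 0.600), (1, 0.385), (2, 0.009), (3, 0.006)]

# With a threshold, the two small entries are dropped and the sum falls short of 1.
kept = filter_topics(full, minimum_probability=0.01)
kept_sum = sum(p for _, p in kept)

# With the threshold effectively disabled, everything is kept.
kept_all = filter_topics(full, minimum_probability=0.0)
kept_all_sum = sum(p for _, p in kept_all)

print("thresholded:", kept, "sum:", kept_sum)
print("unthresholded:", kept_all, "sum:", kept_all_sum)
```

So if the values returned for a document look like they are missing some mass, passing a very small minimum_probability, as the question does for get_term_topics, is a reasonable first thing to try.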
